
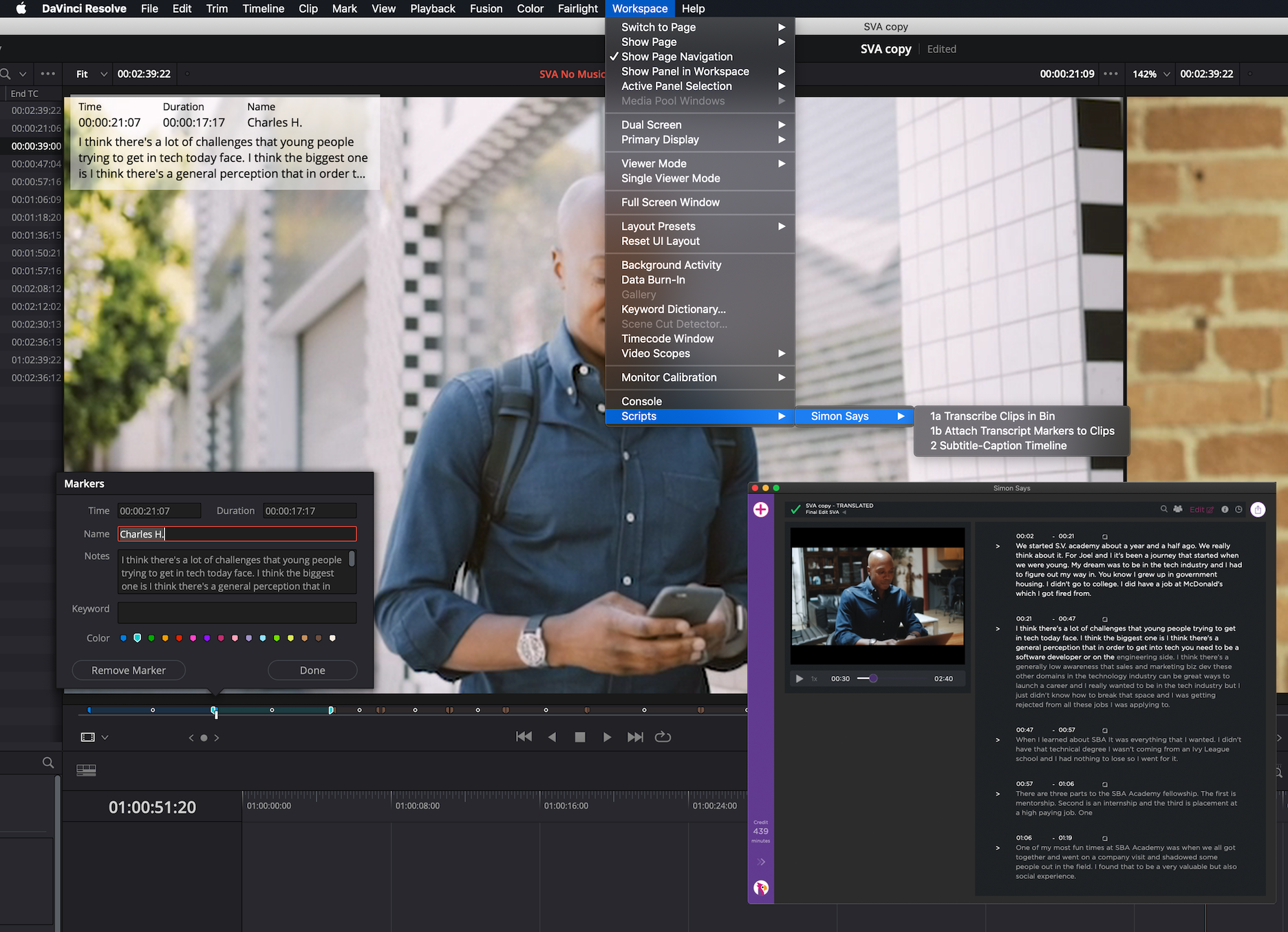

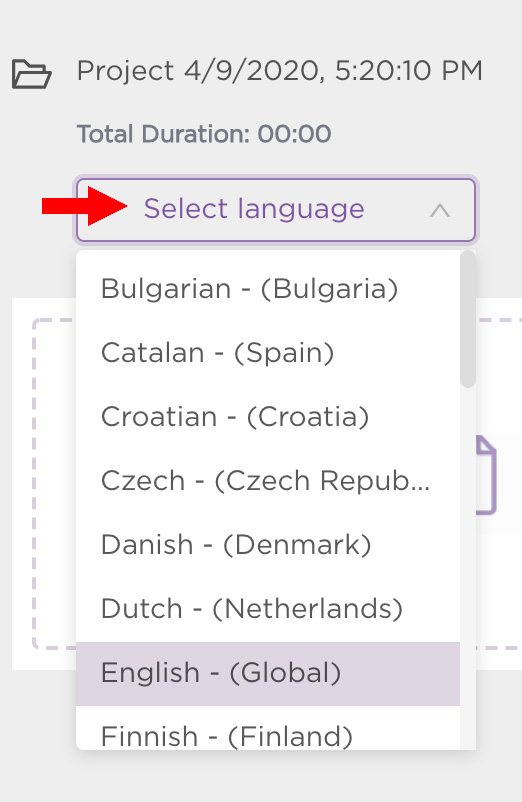

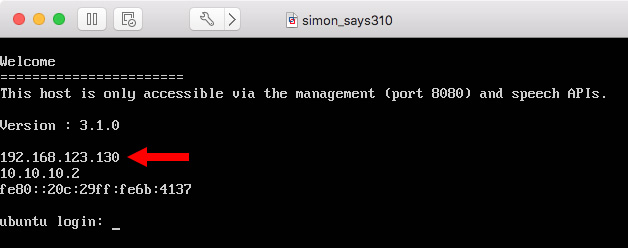

Simon Says is a timecode-based AI transcription platform, and now it is bringing a new service, Assemble, to the table, allowing fast remote collaboration on edits with simple text editing.

Imagine you have a long series of interviews to sort through to find the best parts. It can be a laborious process and, ironically, even though it is video, it isn’t very visually intuitive. That’s because audio is, well, audio, and you can’t hear it unless the video is playing. Written text, on the other hand, is visual and represents spoken audio very well, and it is this concept that makes this new announcement from Simon Says quite compelling. It’s almost taking the idea of a service like Frame.io, which allows comments from clients, and extending it so that collaborators can make actual changes to the edit flow.

You upload the footage to Simon Says using the web, Mac app, or Adobe or FCP extensions. The clips are then transcribed by the Simon Says service, giving you a full record of what is said in them. The really clever bit is when it comes to manipulating the transcription. Once the transcription is complete, you can read through it, highlight blocks of text and assign them as individual soundbites. Once you’ve highlighted all the important bits of the footage or interview you want to isolate, it’s then possible to drag and rearrange them, effectively creating your story structure there and then. Collaborators can then comment on what has been done, or clients can sign off on things.
